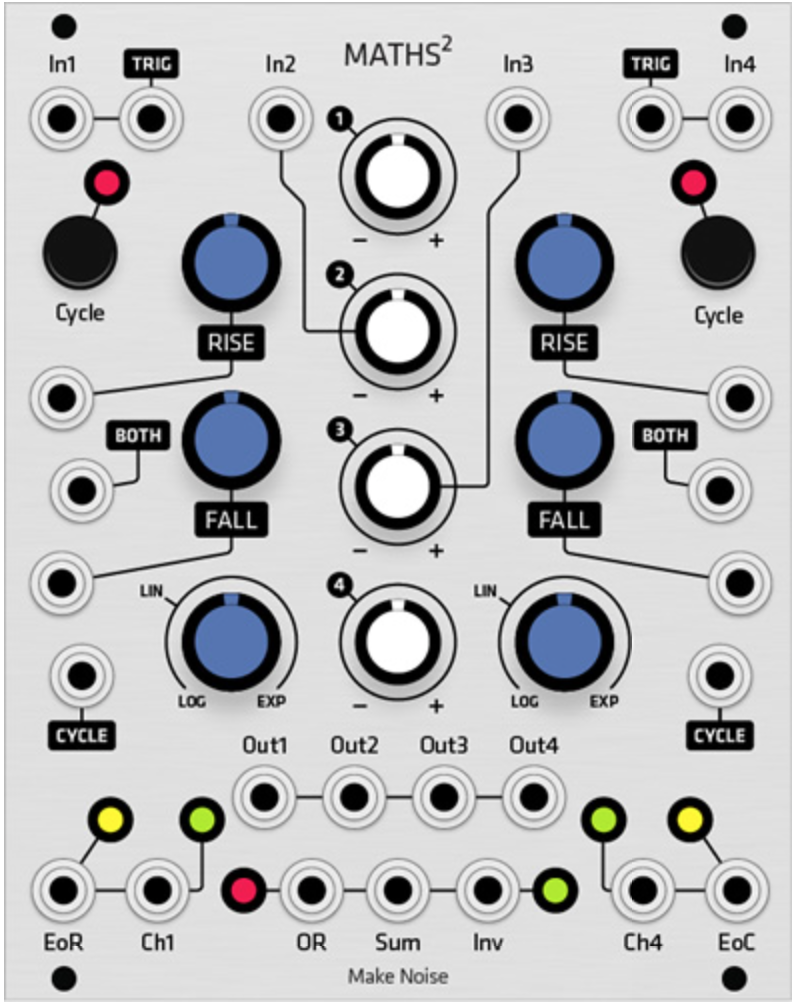

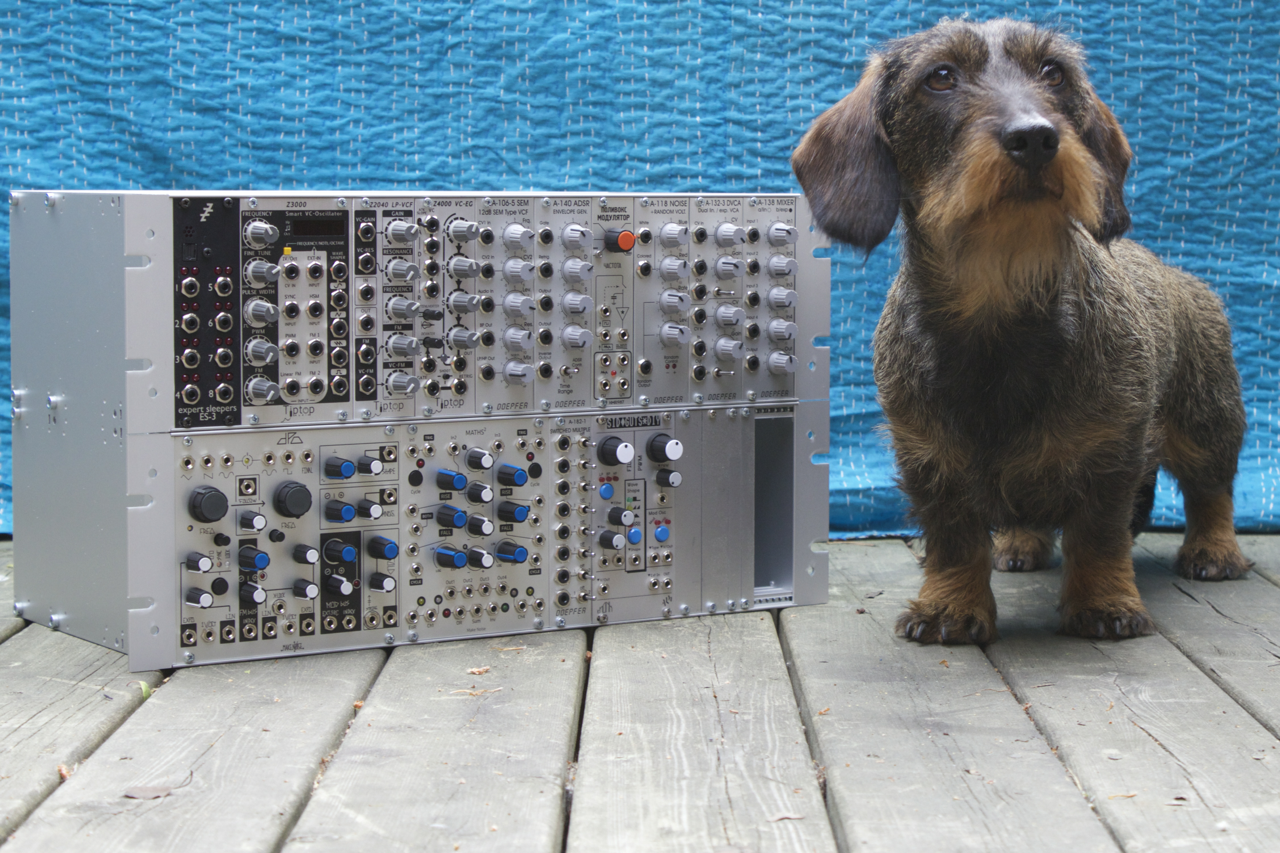

At one point in my life I found myself working for a company that made synthesisers of the musical instrument kind. The guts of the synths consisted of a bunch of DSPs that did all the audio processing, some memory, and a general purpose CPU that handled the rest: scanning the keyboard, scanning the knobs and buttons on the control panel and providing MIDI and USB connectivity.

One of my jobs was to clean out a list of bugs that users had reported on a released product. Most of these bugs were minor and sometimes obscure but the company prided itself on high quality so they would strive to fix all reported bugs, no matter how minor. Unless the bug report was that “the product should cost 50% less, be all-analogue, and be able to transport me to my place of work”. Some people have strong feelings of entitlement.

One of the bugs on my list was that the delay glitched if you turned it off and on again quickly. A delay is a type of audio effect that simulates an echo: if you send a sound into it then the sound will be repeated some number of times while fading out. Early delays were implemented using magnetic tape. If the recording head was placed some distance ahead of the playback head then a sound that was recorded into the delay unit would play back as an echo a short while later as the tape passed by the playback head. In this particular case the delay was digital and consisted of a circular buffer of audio samples that the DSP would continuously feed data into (mixed together with feedback from audio that was already in the buffer). The buffer would then be played back by being mixed with the main audio output signal.
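To make the circular buffer idea concrete, here is a minimal Python sketch of a feedback delay. It is not the synth's actual code (that was hand-written DSP assembly); the class name, the `feedback` and `input_gain` parameters, and the shared read/write position are all assumptions made for illustration.

```python
class DelayLine:
    """Feedback delay built on a circular buffer of samples (illustrative only)."""

    def __init__(self, length, feedback=0.5, input_gain=1.0):
        self.buffer = [0.0] * length  # circular buffer, starts out silent
        self.pos = 0                  # shared read/write position
        self.feedback = feedback      # share of the old echo fed back into the buffer
        self.input_gain = input_gain  # assumed "input volume"; 0.0 writes silence

    def process(self, dry):
        """Consume one input sample and return one output sample."""
        # The sample at the current position was written `length` samples ago.
        delayed = self.buffer[self.pos]
        # Overwrite it with the new input mixed with feedback from the old content.
        self.buffer[self.pos] = self.input_gain * (dry + self.feedback * delayed)
        # Advance and wrap around: this is what makes the buffer circular.
        self.pos = (self.pos + 1) % len(self.buffer)
        # Mix the echo into the main output signal.
        return dry + delayed


# Feed a single impulse through a 4-sample delay and watch the echoes decay.
delay = DelayLine(length=4, feedback=0.5)
impulse = [1.0] + [0.0] * 11
out = [delay.process(x) for x in impulse]
```

With these made-up settings the impulse comes back four samples later and then again at half the level after another four, which is the fading echo described above.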

The delay had an on/off button on the front panel of the synth and when you switched it off the DSP would set the input volume to the delay buffer to 0 so that it would fill up with silent samples. However, since this happened at the normal audio rate it would take a few seconds before the whole buffer was filled with zeros. A user had discovered that if you played something with the delay enabled, then switched it off and then quickly switched it on again then parts of the delay buffer would still contain sound that you would hear, sometimes with nasty transients. The solution was to program the DSP to quickly zero the delay buffer using a DMA transfer whenever the delay was turned off.
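The reason the glitch existed can be shown with a small Python simulation. This is only a sketch: the 2-second mono buffer, the half-second off time, and the function names are hypothetical, and the real buffer layout was different.

```python
SAMPLE_RATE = 44100  # samples per second, as in the text


def mute_for(buffer, pos, n_samples):
    """Write zeros at audio rate, one per sample tick; return the new position."""
    for _ in range(n_samples):
        buffer[pos] = 0.0
        pos = (pos + 1) % len(buffer)
    return pos


def stale_samples(buffer):
    """Count samples that still hold old audio."""
    return sum(1 for s in buffer if s != 0.0)


def flush_seconds(buffer_len):
    """Time needed to overwrite the whole buffer at audio rate."""
    return buffer_len / SAMPLE_RATE


buffer_len = 2 * SAMPLE_RATE      # hypothetical 2-second buffer
buffer = [0.25] * buffer_len      # pretend it is full of old audio

# The user switches the delay off for only half a second, then on again.
pos = mute_for(buffer, 0, SAMPLE_RATE // 2)
left = stale_samples(buffer)
```

In this toy setup three quarters of the buffer still holds old audio when the delay is switched back on, which is why clearing it in one go with a DMA transfer was the obvious fix.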

This may sound trivial but the code running on the DSP was hand-coded assembly optimised to within an inch of its life. The DSP had one job: to present a pair of completely processed 24-bit samples — a stereo signal — to the Digital-Analogue converter inputs at the sample rate, which was 44100 times per second. If a sample wasn’t ready in time then digital noise would result at the output. This was frowned upon because it would sound horrible and if it happened while the instrument was being played on stage, through a serious sound system, you might as well poke out the eardrums of your audience using an ice pick. This made the rules of the game for the DSP pretty simple: if it could execute one instruction per cycle and was running at F cycles per second then that meant that it could spend N=F/(2*44100) instructions per sample. In fact it had to spend exactly N instructions or the timing would drift off. Any unused cycles had to be filled in with “No-op” instructions that do nothing but waste time. In this case N was a couple of hundred cycles. This meant that the DSP code was a couple of hundred instructions long, which was good because there was not so much of it, but bad because there were very few unused cycles left in which to set up the DMA.
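The budget arithmetic is easy to sketch in Python. The clock frequency and the instruction count below are hypothetical values picked so that N comes out at a round 200; I am not quoting the real DSP's numbers.

```python
SAMPLE_RATE = 44100  # sample pairs per second
CHANNELS = 2         # a stereo pair


def instructions_per_sample(clock_hz):
    """N = F / (2 * 44100), assuming one instruction per cycle."""
    n, remainder = divmod(clock_hz, CHANNELS * SAMPLE_RATE)
    if remainder:
        # A fixed-length loop cannot stay in step with the converter otherwise.
        raise ValueError("clock is not a whole multiple of the sample rate")
    return n


def nop_padding(clock_hz, instructions_used):
    """Number of no-ops needed to pad the per-sample code to exactly N."""
    budget = instructions_per_sample(clock_hz)
    if instructions_used > budget:
        raise ValueError("code does not fit in the per-sample budget")
    return budget - instructions_used


CLOCK_HZ = 17_640_000  # hypothetical clock: 200 * 2 * 44100
budget = instructions_per_sample(CLOCK_HZ)
spare = nop_padding(CLOCK_HZ, instructions_used=188)  # 188 is a made-up figure
```

Whatever `nop_padding` returns is all the room there is for new work such as setting up a DMA transfer.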

This type of DSP is built to do one thing: to perform arithmetic on 24-bit fixed point numbers. It is a multiply-add engine. Multiplying and offsetting 24-bit fixed point numbers is easy and everything else is a pain in the upholstery. Instructions are often very limited as to which registers they can operate on and the data you want to operate on is therefore, as per Murphy’s law, always in the wrong type of register.

After scrounging up some spare cycles I managed to set up a DMA that zeroed the delay buffer whenever it was turned off. Apparently. I tested it: no problem. I told my boss and he came in and listened. Now, my boss had worked with these synths for years and years and he immediately heard what I didn’t: a diminutive “click” sound that was so weak that I couldn’t hear it at all. “Probably you didn’t turn off the input to the delay buffer so a couple of samples get in there while the DMA is running.” I verified it but no, the input was properly turned off.

Now that I knew what to listen for I could hear the click if I put on headphones and turned the volume up. Headphones are always scary when programming audio applications because if you screwed up the code somewhere you might very well suddenly get random data at the DACs, which means very loud digital noise in the headphones, which means broken eardrums and soiled pants in the programmer. In contrast, a single sample of random data at 44.1kHz sounds a little bit like a hair being displaced close to your ear. In the beginning I had to stop breathing and sit absolutely still to hear the click noise. Moving my head just a little would make mechanical noise as the headphones resettled, noise that would drown out the click. After a while though, my brain became better at picking out the clicks, and after a day or so I could hear them through loudspeakers. Unfortunately I soon started to hear single-sample clicks everywhere…

So what was going on? Was the delay buffer size off by one? No. Was the playback pointer getting clobbered? No. Did the DMA do anything at all? Yes, by filling the buffer with a non-zero value and then running the DMA I could see that the buffer was zeroed afterwards. Was it some sort of timing glitch that caused a bad value to appear at the DACs? Not that I could tell. Blaming the compiler is always an option but in this case the code was hand-written assembly so that alternative turned out to be less satisfying. A compiler can’t defend itself but your co-worker can…

The debugging environment was pretty basic. The only tool available was a home-grown debugger that could display something like 8 words of memory together with the register contents and not much else. Looking through a megabyte or so of delay buffer data through an 8 word window might sound like fun but I guess I’m easily bored…

One thing that became apparent after a while was that the click sound would appear even when the delay buffer was empty to begin with. This indicated that the bad data was put there instead of being left behind. At this point I started to throw things out of the code. I find this to be a pretty good way forward if a bug checks your early advances. Remove stuff until the bug goes away, then you can start to add stuff back again. After a while I had just the delay code left. I wrote a routine that filled the delay buffer with a known value, ran the DMA, and then stepped through the whole buffer, verifying the contents word-by-word. And bingo, it found a bad sample.
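The original check was DSP assembly, but its shape can be sketched in Python. Everything here is a stand-in: `KNOWN_VALUE`, the two clear functions, and the corrupted word in `faulty_clear` are hypothetical and only imitate the behaviour described above.

```python
KNOWN_VALUE = 0x5A5A5A  # hypothetical 24-bit fill pattern


def find_bad_samples(buffer, clear):
    """Fill the buffer with a known value, clear it, and report what is left."""
    for i in range(len(buffer)):
        buffer[i] = KNOWN_VALUE
    clear(buffer)  # stands in for kicking off the DMA and waiting for it
    # Step through the whole buffer word by word; anything non-zero is a defect.
    return [(i, value) for i, value in enumerate(buffer) if value != 0]


def good_clear(buffer):
    """A clear that works as intended."""
    for i in range(len(buffer)):
        buffer[i] = 0


def faulty_clear(buffer):
    """Imitates the bug: clears everything, then leaves one stray word behind."""
    good_clear(buffer)
    buffer[len(buffer) // 2] = 0x000137  # made-up garbage value


clean = find_bad_samples([0] * 1024, good_clear)
dirty = find_bad_samples([0] * 1024, faulty_clear)
```

Reporting the index and the value together is the useful part: the value tells you whether the word was left behind (it would equal the fill pattern) or written by something else.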

Seeing it made it real. As long as I only heard it, the defect could theoretically have been introduced somewhere later in the processing or in the output chain. But now I could see the offending sample through my tiny window, sitting there in the middle of a sea of zeros. What is more, the bad sample did not contain the known value that the delay buffer was initialised with. At this point suspicions were raised against the DMA itself. A quick look through the DSP manual revealed an errata list on the DMA block several pages long. This DSP had a horrendously complex DMA engine that could do all sorts of things. For example, it could copy a buffer to a destination using only odd or even destination addresses; in other words, it could copy a flat buffer into a single channel of a stereo pair. It seemed like half of these modes didn’t really work.
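To illustrate that odd/even addressing mode, here is a small Python sketch of a strided copy. The function name and parameters are my own; the real DMA engine was configured through hardware registers that I won't try to reproduce here.

```python
def strided_copy(src, dst, start, stride):
    """Copy src into every `stride`-th slot of dst, beginning at index `start`."""
    for i, sample in enumerate(src):
        dst[start + i * stride] = sample


mono = [1, 2, 3, 4]   # flat source buffer
stereo = [0] * 8      # interleaved pairs: even index = one channel, odd = the other

# Using only odd destination addresses fills a single channel of the stereo pair.
strided_copy(mono, stereo, start=1, stride=2)
```

After the call the odd slots hold the mono data and the even slots are untouched.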

None of the issues listed in the errata list fit what I was seeing but I still eyed the DMA engine suspiciously. I therefore tried to zero the delay buffer using a different DMA mode and it worked! Ah, hardware: can’t love it, can’t kill it. Hardware designers on the other hand…

So the bug was put to rest and I moved on to the next issue on my list. Did we learn something? When you can’t blame the compiler, blaming hardware remains an option. In the end I came away pretty impressed by the dedication to quality and stability that this small company displayed. The original bug report was on a glitch that many companies wouldn’t bother to correct at all. The initial fix took maybe half a day to implement and reduced the amount of unwanted data in the delay from 1-2 seconds (between 90000 and 180000 samples) down to just a single, barely audible, sample in what was already a rare corner case. Fixing it completely took about a week. In other words, it took four hours to fix roughly 99.999% of the bug and 36 hours to fix the rest of it. But the message was pretty clear: fix it and fix it right.

And to all the semiconductor companies out there: don’t let your intern design the DMA engine.